Predicting Ground-Level Scene Layout from Aerial Imagery

Menghua Zhai, Zachary Bessinger, Scott Workman, and Nathan Jacobs

Abstract

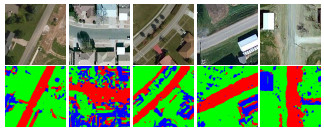

We introduce a novel strategy for learning to extract semantically meaningful features from aerial imagery. Instead of manually labeling the aerial imagery, we propose to predict (noisy) semantic features automatically extracted from co-located ground imagery. Our network architecture takes an aerial image as input, extracts features using a convolutional neural network, and then applies an adaptive transformation to map these features into the ground-level perspective. We use an end-to-end learning approach to minimize the difference between the semantic segmentation extracted directly from the ground image and the semantic segmentation predicted solely based on the aerial image. We show that a model learned using this strategy, with no additional training, is already capable of rough semantic labeling of aerial imagery. Furthermore, we demonstrate that by finetuning this model we can achieve more accurate semantic segmentation than two baseline initialization strategies. We use our network to address the task of estimating the geolocation and geoorientation of a ground image. Finally, we show how features extracted from an aerial image can be used to hallucinate a plausible ground-level panorama.

Downloads

Paper (pdf)BibTeX

@inproceedings{zhai2016predicting,

title = {Predicting Ground-Level Scene Layout from Aerial Imagery},

author = {Zhai, Menghua and Bessinger, Zachary and Workman, Scott and Jacobs, Nathan},

booktitle = {IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

year = {2017}

}